Why behind AI: Skill issue

This week I'll take a look at a recent interview with Andrej Karpathy for the VC-oriented podcast No Priors. Andrej is one of the most interesting players in AI, both for introducing new concepts to the public (vibe coding, software 3.0) and for his contrarian takes, which are mostly rooted in practical observations.

“In December is when it really just something flipped where I kind of went from 80/20 of like writing code by myself versus just delegating to agents. And I don’t even think it’s 20/80 by now. I think it’s a lot more than that. I don’t think I’ve typed like a line of code probably since December basically.”

“Literally like if you just find a random software engineer at their desk and what they’re doing, like their default workflow of building software is completely different as of basically December.”

“Everything just like happens in these macro actions over your repository. It’s not just like here’s a line of code, here’s a new function. It’s like here’s a new functionality and delegate it to agent one. Here’s a new functionality that’s not going to interfere with the other one. Give it to two.”

“It all kind of feels like skill issue when it doesn’t work... What is your token throughput and what token throughput do you command?”

“I actually kind of experienced this when I was a PhD student. You would feel nervous when your GPUs are not running. But now it’s not about flops, it’s about tokens.”

The "coding is changing" meme is one of the hardest trends to capture accurately: many engineers refuse to use AI tools for political or personal reasons, while many others have fully moved to AI-only workflows. What I do know is that Anthropic did $6B of revenue in February, which had twenty-eight days. As the old saying goes, when money talks, bullshit walks.

“I used to use like six apps, completely different apps and I don’t have to use these apps anymore. Dobby controls everything in natural language.”

“These apps that are in the app store for using these smart home devices, these shouldn’t even exist kind of in a certain sense. Like shouldn’t it just be APIs and shouldn’t agents be just using it directly?”

“The customer is not the human anymore. It’s like agents who are acting on behalf of humans and this refactoring will probably be substantial.”

“Maybe there’s like an overproduction of lots of custom bespoke apps that shouldn’t exist because agents kind of crumble them up and everything should be a lot more just like exposed API endpoints and agents are the glue of the intelligence that actually tool calls all the parts.”

“The industry just has to reconfigure in so many ways... the customer is not the human anymore. It’s like agents who are acting on behalf of humans and this refactoring will be will probably be substantial in a certain sense.”

The most obvious future of software is a lot of custom software that everybody generates for themselves, powered by paid APIs. Enterprise software will slowly retreat from UXs towards APIs, eliminating a significant amount of go-to-market headcount in the process. The counterargument to this thesis is that enterprise software is about resilience and accountability, but I struggle to see what's difficult about giving you all the logs needed for observability, security analytics and compliance through a separate API as a service, or letting you track these yourself within your primary system of action.
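To make the "APIs plus logs" idea concrete, here is a minimal sketch of an agent calling vendor endpoints while every call is recorded for audit. Everything in it is hypothetical: the two "endpoints" are local stubs, and the names `TOOLS`, `AUDIT_LOG` and `call_tool` are invented for illustration rather than taken from any real product.

```python
import json
import time
from typing import Callable, Dict, List


# Hypothetical vendor endpoints, stubbed as local functions for illustration.
def create_invoice(customer: str, amount_usd: float) -> dict:
    return {"invoice_id": f"inv-{customer}", "amount_usd": amount_usd}


def get_order_status(order_id: str) -> dict:
    return {"order_id": order_id, "status": "shipped"}


# The "product" is just a registry of callable endpoints, with no UI.
TOOLS: Dict[str, Callable[..., dict]] = {
    "create_invoice": create_invoice,
    "get_order_status": get_order_status,
}

# In-memory stand-in for a separate logging/compliance API.
AUDIT_LOG: List[dict] = []


def call_tool(agent_id: str, tool: str, **kwargs) -> dict:
    """Dispatch a tool call on behalf of an agent and record it for audit."""
    if tool not in TOOLS:
        raise KeyError(f"unknown tool: {tool}")
    result = TOOLS[tool](**kwargs)
    AUDIT_LOG.append(
        {"ts": time.time(), "agent": agent_id, "tool": tool,
         "args": kwargs, "result": result}
    )
    return result


# An agent acting on behalf of a human makes two calls.
call_tool("agent-1", "get_order_status", order_id="A-100")
call_tool("agent-1", "create_invoice", customer="acme", amount_usd=250.0)
print(json.dumps(AUDIT_LOG, indent=2))
```

The point of the sketch is only that the audit trail falls out of the dispatch layer; whether a vendor or the customer hosts that log is a separate decision.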

“The name of the game now is to increase your leverage. I put in just very few tokens just once in a while and a huge amount of stuff happens on my behalf.”

“I let auto research go for like overnight and it came back with tunings that I didn’t see. I did forget like the weight decay on the value embeddings and my Adam betas were not sufficiently tuned.”

“A research organization is a set of markdown files that describe all the roles and how the whole thing connects. And you can imagine having a better research organization. So maybe they do fewer stand-ups in the morning because they’re useless. And this is all just code.”

“The LLM part is now taken for granted. The agent part is now taken for granted. Now the claw-like entities are taken for granted and now you can have multiple of them and now you can have instructions to them and now you can have optimization over the instructions.”

“A swarm of agents on the internet could collaborate to improve LLMs and could potentially even like run circles around Frontier Labs. Like, who knows? Frontier Labs have a huge amount of trusted compute, but the Earth is much bigger and has huge amount of untrusted compute.”

If we extrapolate this argument to the enterprise, you can look at every org as a collection of .md files. HR policies, order-to-cash workflows, customer support: all of it is already mapped out in existing documentation and tools.

If everything can be mapped out in simple instructions for agents, the obvious future is one where many of these are shared or enriched externally. This would break many of the fundamental workflows that we have today, which often center around paying individuals for basic organizational domain knowledge.

“The closed models are ahead but people are monitoring the number of months that open source models are behind. It started with there’s nothing and then it went to 18 months and now it’s convergence. Maybe they’re behind by like six months, eight months.”

“Centralization has a very poor track record in my view. I want there to be a thing that’s behind and that is kind of like a common working space for intelligences that the entire industry has access to.”

“I think basically by accident we’re actually in an okay spot.”

“Even on the closed side I almost feel like it’s been even further centralizing recently because a lot of the front runners are not necessarily like the top tier. I almost wish there were more labs.”

“In machine learning, ensembles always outperform any individual model and so I want there to be ensembles of people thinking about all the hardest problems.”

“There is a need in the industry to have a common open platform that everyone feels sort of safe using... the big difference is that everything is capital. There’s a lot of capex that goes into this. So I think that’s where things fall apart a little bit, make it a bit harder to compete.”

The "open source models are close in performance" storyline hasn't been true for a while, and I don't see it converging anytime soon. There are two primary issues with it: a) cost-to-value and b) alternative use of the same time.

On the first point, the allure of open source is that you can run a frontier-level model on a personal device. This is mostly not true, because you need a lot of RAM, which is either not available on consumer devices or requires a hardware investment of $15k+ to "daisy-chain" Mac Studios. The result is much slower performance than API access and a very cost-inefficient use of the $15k (which would cover years of cloud inference). In practice, local models are mostly run as smaller quantized versions, where performance degrades significantly.
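A rough way to see why quantization becomes unavoidable on consumer hardware: the weights alone take approximately parameter count times bits per weight, divided by eight, in bytes. The sketch below uses a hypothetical 400B-parameter model (an illustrative number, not the size of any specific release) and ignores the KV cache and runtime overhead, so real requirements are higher.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Hypothetical model size, for illustration only.
PARAMS_BILLION = 400

for bits in (16, 8, 4):
    gb = weight_memory_gb(PARAMS_BILLION, bits)
    print(f"{bits}-bit weights: ~{gb:.0f} GB")
# 16-bit weights: ~800 GB
# 8-bit weights: ~400 GB
# 4-bit weights: ~200 GB
```

Halving the bits per weight halves the footprint, which is why local setups end up on aggressively quantized variants.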

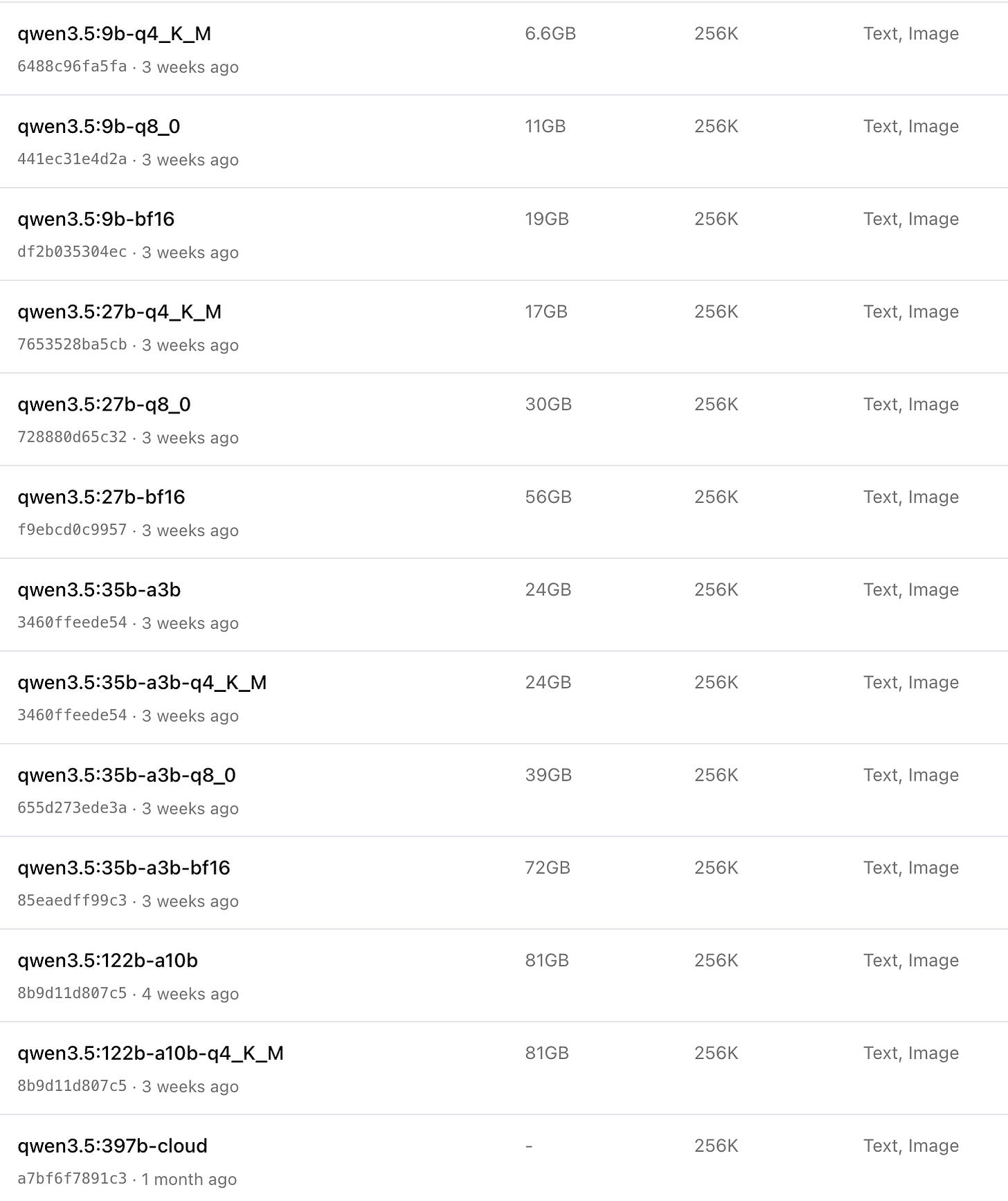

The full-size Qwen 3.5 model (which remains significantly behind actual frontier models) would be best served via an API (with a smaller context window than the 1M from GPT 5.4 or Claude Opus/Sonnet). Everything else is a set of smaller variants, many of them forked by third parties and offering varying degrees of degradation. To run the biggest of these at 81GB, you will need a Mac Studio with 128GB of RAM, which will cost you around $4K.

Alternatively you can just pay for almost 2 years of the most expensive tiers that Anthropic or OpenAI offer you, with all the tools and benefits those subscriptions come with. The odds of being significantly more productive vs using a subpar homelab implementation are very high from my point of view.
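The arithmetic behind that comparison, as a back-of-the-envelope sketch. The $4K hardware price is the one quoted above; the $200/month subscription price is an assumption about what a top consumer tier costs, not a figure from this post.

```python
def months_covered(hardware_cost_usd: float, monthly_subscription_usd: float) -> float:
    """How many months of subscription the same money would buy."""
    return hardware_cost_usd / monthly_subscription_usd


MAC_STUDIO_USD = 4_000   # 128GB Mac Studio, price quoted in the text
MONTHLY_TIER_USD = 200   # assumption: price of a top subscription tier

months = months_covered(MAC_STUDIO_USD, MONTHLY_TIER_USD)
print(f"${MAC_STUDIO_USD:,} buys about {months:.0f} months of subscription")
# $4,000 buys about 20 months of subscription
```

Under that assumption the hardware budget buys 20 months of subscription, which is where "almost 2 years" comes from. Electricity and resale value are left out on purpose.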

“There’s currently kind of like an overhang where there can be a lot of unhobbling almost potentially of a lot of digital information processing that used to be done by computers and people and now with AI as a third kind of manipulator of digital information. There’s going to be a lot of refactoring in those disciplines.”

“The physical world is actually going to be like behind that by some amount of time.”

“The total addressable market in terms of the amount of work in the physical world is massive, possibly even much larger than what can happen in digital space. But atoms are just like a million times harder.”

“Right now this digital is like my main interest, then interfaces would be like after that, and then maybe like some of the physical things... their time will come and they’ll be huge when they do come.”

“At some point you have to go to the universe and you have to ask it questions. You have to run an experiment and see what the universe tells you to get back to learn something.”

“We’re going to start running out of stuff that is actually already uploaded. So you’re going to at some point read all the papers and process them and have some ideas about what to try.”

Part of the reason software valuations are getting slashed in the market is that it should be obvious by now that the most interesting outcomes software can achieve are tied to solving hardware problems. Right now we are simply seeing AI finalizing the digitization problem for most companies, with “digital-physical” interfaces being the next wave. Afterwards we move towards a different future, where robotics will drive the conversation, with software being supplemental to those products.

“I feel like a bit more aligned with humanity in a certain sense outside of a frontier lab because I’m not subject to those pressures almost. And I can say whatever I want.”

“Fundamentally at the end of the day like when the stakes are really high, if you’re an employee at an organization I don’t actually know how much sway you’re going to have on the organization and what it’s going to do.”

“If you’re outside of the frontier lab your judgment fundamentally will start to drift because you’re not part of what’s coming down the line.”

“I don’t want it to be like a closed doors with two people or three people. I feel like that’s not a good future.”

The individuals building the most consequential technology are financially incentivized to be bullish about it, and structurally constrained from being fully honest about its limitations or risks.

This asymmetry of information makes it very difficult to understand what’s really going on unless you push yourself into the trenches. Whether you are building or selling AI, your opinions are irrelevant until you are working with the technology on a daily basis.