Why behind AI: Five-layer (NVIDIA) cake

GTC 2026

The original GTC, short for “GPU Technology Conference,” launched back in 2009 with 1,500 attendees. Today the event’s scope extends well beyond GPUs, and it hosts a heavily curated list of 30,000 attendees.

The market cap of NVIDIA during this period has also changed slightly, from around $10B to over $4.5T. As such, the keynote of this event has grown proportionally in importance. Here is what stood out.

1. $1 Trillion dreams

“Last year, at this time, I said that where I stood at that moment in time, we saw about $500 billion. We saw $500 billion of very high confidence demand and purchase orders for Blackwell and Rubin through 2026. I said that last year. Now I don’t know if you guys feel the same way, but $500 billion is an enormous amount of revenue. Not one impressed. I know why you’re not impressed because all of you had record years.

Well, I’m here to tell you that right now, where I stand, a few short months after GTC D.C., 1 year after last GTC, right here where I stand, I see through 2027, at least $1 trillion. Now does it make any sense? And that’s what I’m going to spend the rest of the time talking about. In fact, we are going to be short. I am certain computing demand will be much higher than that.”

It’s quite an indication of the state of the market that the CEO of the largest company in the world claimed his upcoming compute backlog will double in size to $1T, and the stock stayed flat.

The biggest indication of whether or not the AI buildout will continue is the scaling of the latest NVIDIA architecture across the hyperscalers and the largest consumers of compute. If Jensen is not being misleading (and at this stage the penalty for bad behavior would be significant), it’s fair to say that things are accelerating, not stagnating.

2. Token factories go brrrrrr

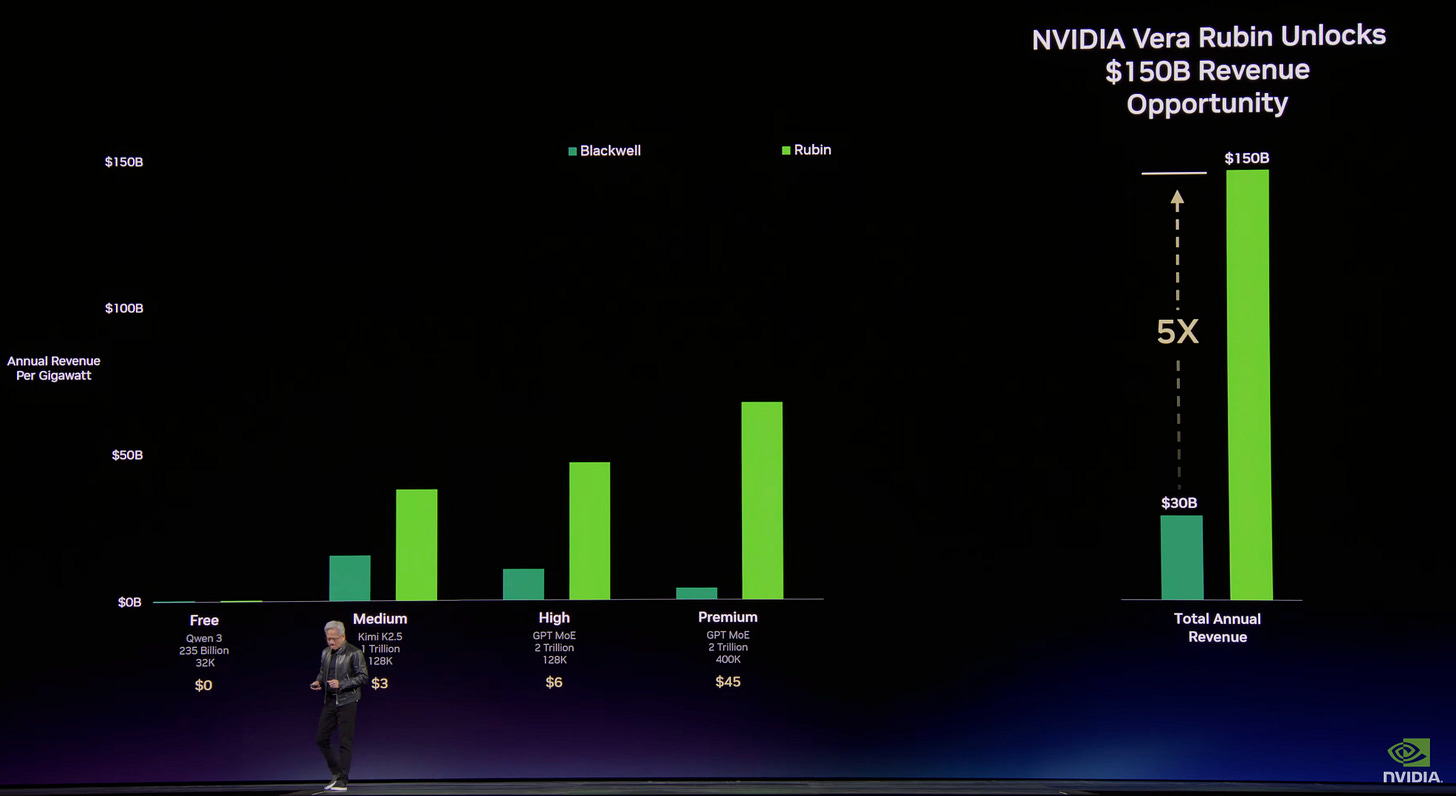

“Tokens are the new commodity. And like all commodities, once it reaches an inflection, once it becomes mature or becomes maturing, it will segment into different parts. The high throughput, low speed could be used for the free tier. The next tier could be the medium tier, larger model maybe, higher speed for sure, larger input context length. That translates to a different price point. You could see from all the different services, this one is free. It’s a free tier. The first tier could be $3 per million tokens. The next tier could be $6 per million tokens. You would like to be able to keep pushing this boundary because the larger the model, smarter, the more input token context length, more relevant, the higher the speed — the more you can think and iterate smarter AI models.

So this is about smarter AI models. And when you have smarter AI models, each one of these clicks allows you to increase the price. So this is $45. And maybe one day, there’ll be a premium model that allows you a premium service that allows you to generate token speeds that are incredibly high because you’re in a critical path or maybe you’re doing really long research and $150 per million tokens is just not a thing. Suppose you were to use 50 million tokens per day as a researcher at $150 per million tokens. As it turns out, as a research team, that’s not even a thing.”
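Jensen’s “not even a thing” claim is easy to sanity-check. Below is a minimal Python sketch using the per-million-token prices he quotes on stage ($0, $3, $6, $45 and $150). The tier labels and the 250 working days per year are my own assumptions, not published NVIDIA or vendor pricing.

```python
# Per-million-token prices as quoted in the keynote.
# Tier labels are illustrative, not real product names.
TIER_PRICE_PER_MILLION_USD = {
    "free": 0.0,
    "tier_1": 3.0,
    "tier_2": 6.0,
    "tier_3": 45.0,
    "premium": 150.0,
}


def daily_cost(tokens_per_day: int, tier: str) -> float:
    """Daily spend in USD for a given token volume at a given tier."""
    return tokens_per_day / 1_000_000 * TIER_PRICE_PER_MILLION_USD[tier]


def annual_cost(tokens_per_day: int, tier: str, working_days: int = 250) -> float:
    """Scale daily spend to a year (250 working days is an assumption)."""
    return daily_cost(tokens_per_day, tier) * working_days


if __name__ == "__main__":
    tokens = 50_000_000  # Jensen's example: one researcher, 50M tokens per day
    for tier in TIER_PRICE_PER_MILLION_USD:
        print(f"{tier:>8}: ${daily_cost(tokens, tier):>9,.2f}/day, "
              f"${annual_cost(tokens, tier):>13,.2f}/year")
```

At the $150 premium tier, 50 million tokens comes to $7,500 per researcher per day, or about $1.9M a year under the 250-day assumption. Whether that is “not even a thing” depends entirely on what the researcher’s output is worth, which is the bet the premium tiers are making.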

One of the memes around the AI buildout is that "the cost of LLMs is going close to zero and none of the frontier labs can survive." Besides the obvious hole in this argument, with OpenAI and Anthropic each passing $20B ARR by the end of Q1, what we are actually seeing is the debut of more expensive inference tiers, not discounts. The frontier labs are charging premiums for expanded context windows, more capable models, and faster token generation, almost always via APIs. The demand for this is clearly there, hence OpenAI’s recent pivot toward prioritizing Enterprise over its consumer efforts.

3. Vera Rubin + Groq

“The reason why Groq was so attractive to me is because their computing system, a deterministic data flow processor, it is statically compiled. It is compiler scheduled, meaning the compiler figures out when do the compute — the compute and the data arrives at the same time. All of that is done statically in advance and scheduled completely in software. There’s no dynamic scheduling. The architecture is designed with massive amounts of SRAM, it is designed just for inference, this one workload... if you extended this chart way out here and you said you wanted to have services that delivers not 400 tokens per second, but 1,000 tokens per second, all of a sudden, NVLink 72 runs out of steam and it simply can’t get there. We just don’t have enough bandwidth. And so this is where Groq comes in... If most of your workload is high throughput, I would stick with just 100% Vera Rubin. If a lot of your workload wants to be coding and very high-value engineering token generation, I would add Groq to it. I would add Groq to maybe 25% of my total data center. The rest of my data center is all 100% Vera Rubin.”

In one of my first deep dives on NVIDIA, I highlighted that most analysts were being naive about the company’s momentum because they assumed AMD and the hyperscalers could quickly catch up and win over a significant portion of AI workloads. That assumption only holds if a) NVIDIA stagnates in product development and b) Jensen doesn’t flex his significant liquidity.

The Groq acquisition was Jensen’s response to the risk posed by high-speed token alternatives on the market, and his pitch to the audience makes sense. Adding Groq’s architecture for specific workloads is just another way for customers to increase their ROI on NVIDIA compute.

4. NemoClaw

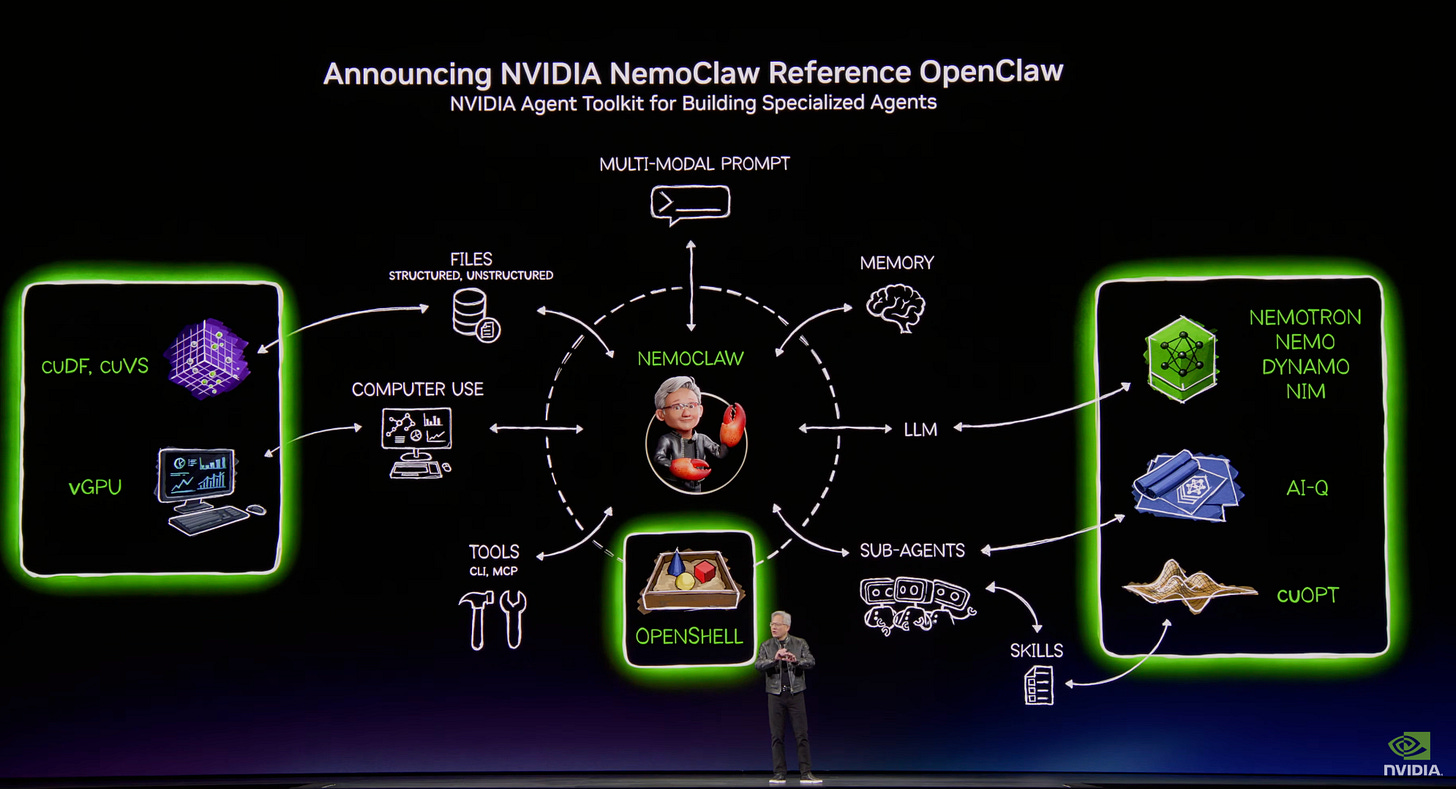

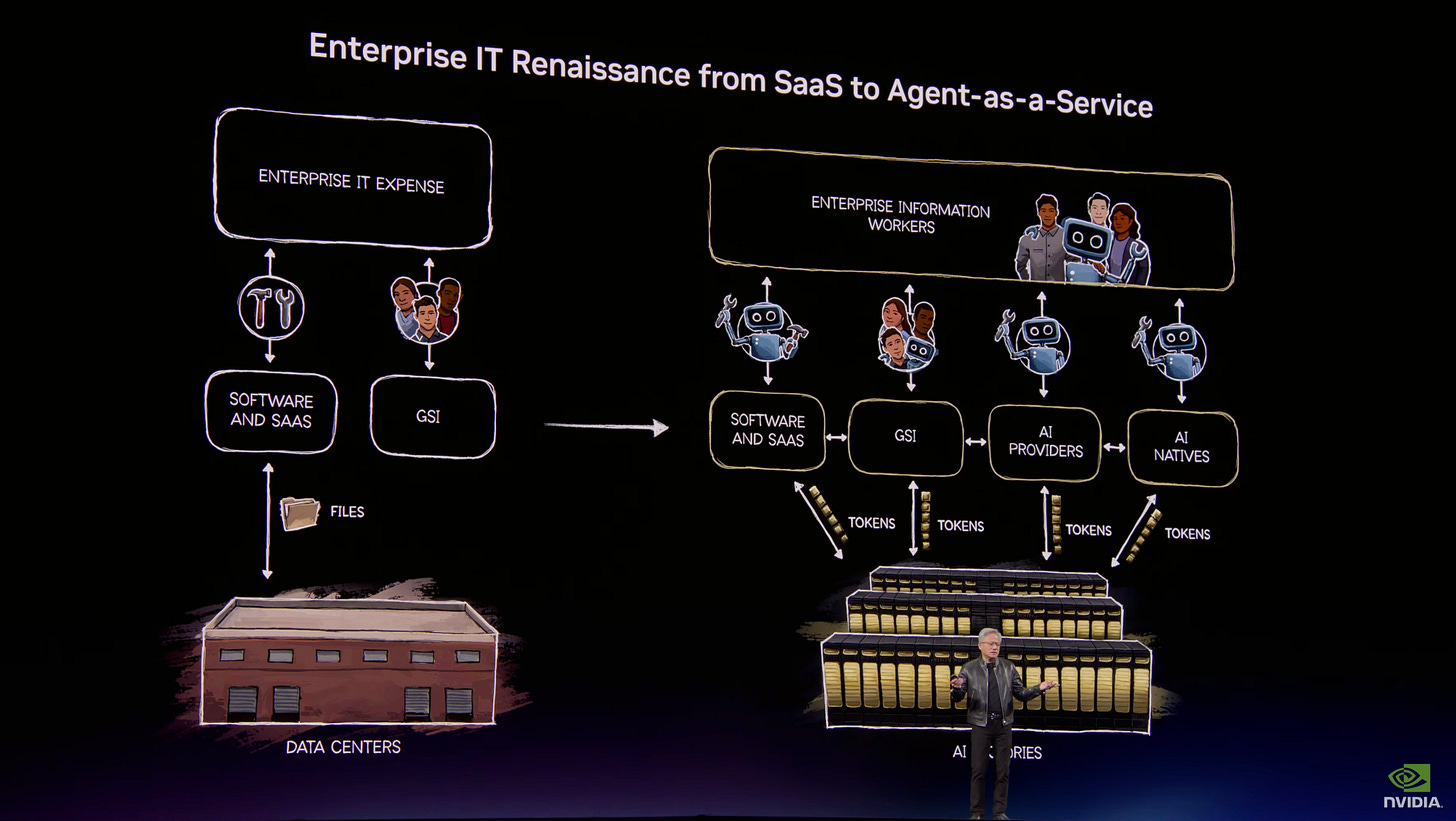

“OpenClaw has open sourced essentially the operating system of agentic computers. It is no different than how Windows made it possible for us to create personal computers. Now OpenClaw has made it possible for us to create personal agents. The implication is incredible... every single company now realize every single company, every single software company, every single technology company for the CEOs, the question is, what’s your OpenClaw strategy? Just as we need to all have a Linux strategy. We all needed to have HTTP, HTML strategy, which started the Internet. We all needed to have a Kubernetes strategy, which made it possible for mobile cloud to happen. Every company in the world today needs to have an OpenClaw strategy and agentic system strategy. This is the new computer.”

Probably the most interesting announcement was completely out of left field. NVIDIA has invested significant resources in OpenClaw in order to provide an open-source fork that is suitable for Enterprise adoption.

“Agentic systems in the corporate network can have access to sensitive information, it can execute code and it can communicate externally. Just say that out loud, okay? Think about it. Access sensitive information, execute code, communicate externally. You could, of course, access employee information, access supply chain, access finance information and send it out, communicate externally. Obviously, this can’t possibly be allowed.”

The surge of interest in OpenClaw has clearly made an impression. But is this format (a "main agent" that can do many tasks across multiple systems) impossible to deploy in an Enterprise environment?

“Every single IT company, every single company, every company, every SaaS company will become a GaaS company. No question about it. Every single SaaS company will become a GaaS company and Agentic-as-a-Service company... This is enterprise IT before OpenClaw... It would pass through software that has tools and systems of records and all kinds of workflow that’s codified into it, and that turns into tools that humans would use. Digital workers would use. That is the old IT industry, software companies creating tools, saving files and of course, GSIs, consultants that help companies figure out how to use these tools and integrate these tools. These tools are incredibly valuable for governance and security and privacy and compliance and all of that continues to be true. It’s just that post-OpenClaw, post agentic, this is what it’s going to look like.”

This is a very peculiar play from Jensen: pushing agents further toward Enterprise customers. It stands in stark contrast to Anthropic, which sent a cease and desist that forced two rebrands of the project, seemingly with little consideration for the opportunity it represented.

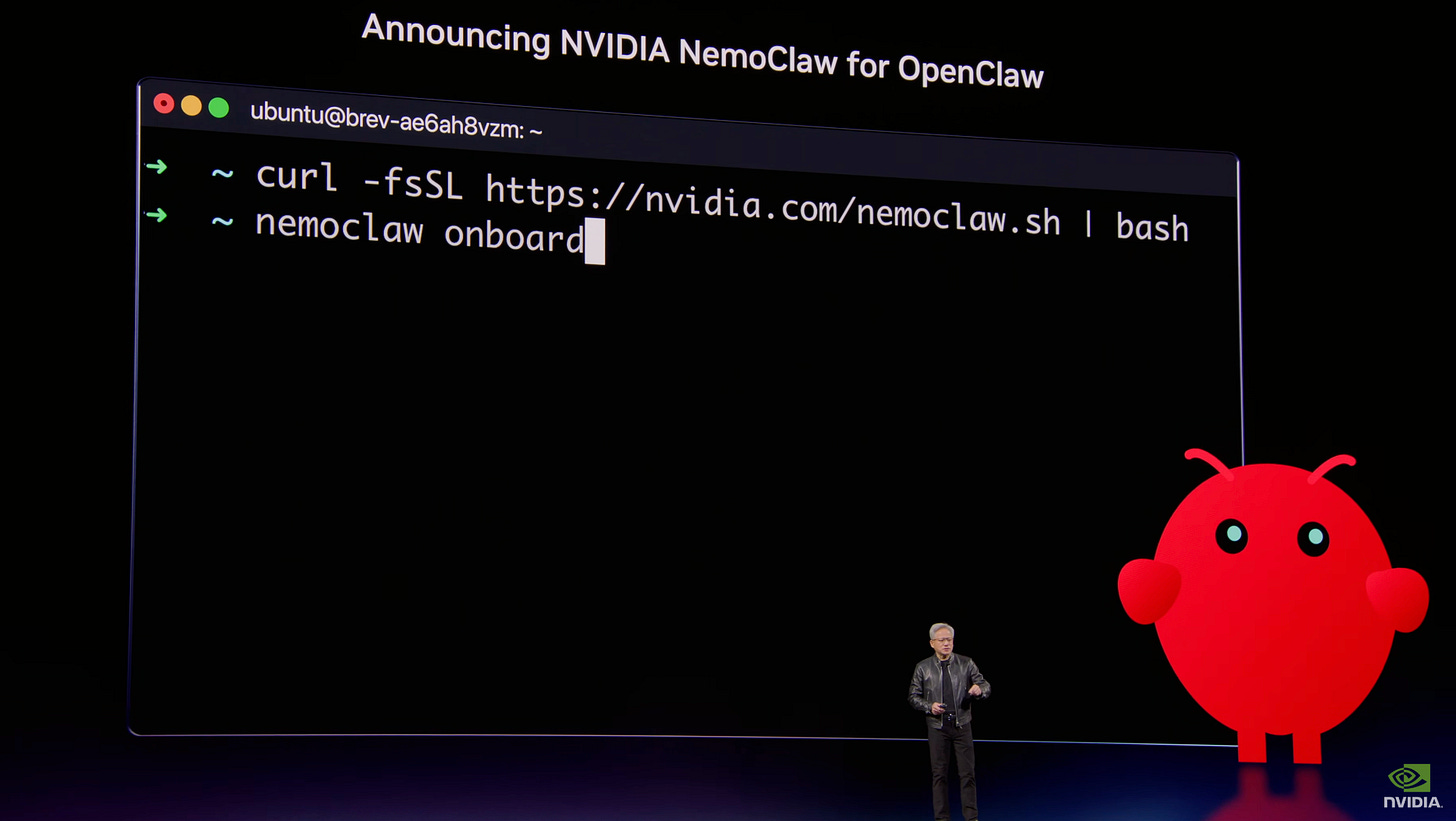

"What we did was we worked with Peter. We took some of the world's best security and computing experts, and we worked with Peter to make OpenClaw, enterprise secure and enterprise private capable. And we call that — this is our NVIDIA OpenClaw reference for Open — NemoClaw, which is a reference for OpenClaw, and it has all these agentic AI toolkits. And the first part of it is technology we call OpenShell that has now been integrated into OpenClaw. Now it's enterprise ready.

This stack with a reference design we call NemoClaw, okay? With a reference stack we call NemoClaw, you could download it, play with it and you could connect to it the policy engine of all of the SaaS companies in the world. And your policy engines are super important, super valuable. So the policy engines could be connected, NemoClaw or OpenClaw with OpenShell would be able to execute that policy engine. It has a network guardrail. It has a privacy router. And as a result, we could protect and keep the claws from executing inside our company and do it safely."
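To make the “policy engine plus guardrail” idea concrete, here is a minimal Python sketch. It is entirely hypothetical: `Capability`, `AgentSession` and `FORBIDDEN_COMBINATIONS` are names I made up, not OpenShell or NemoClaw APIs, and a real policy engine would be far richer. It encodes one plausible rule drawn from Jensen’s earlier warning: an agent session may read sensitive data or communicate externally, but not both.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Capability(Enum):
    """The three agent capabilities Jensen calls out for corporate networks."""
    READ_SENSITIVE = auto()
    EXECUTE_CODE = auto()
    COMMUNICATE_EXTERNALLY = auto()


class PolicyViolation(Exception):
    """Raised when a requested capability would break a policy rule."""


# Hypothetical rule set: one session may touch sensitive data or talk to the
# outside world, but never both (the exfiltration path Jensen describes).
FORBIDDEN_COMBINATIONS: list[frozenset[Capability]] = [
    frozenset({Capability.READ_SENSITIVE, Capability.COMMUNICATE_EXTERNALLY}),
]


@dataclass
class AgentSession:
    """Records every capability an agent has been granted in this session."""
    used: set[Capability] = field(default_factory=set)

    def request(self, capability: Capability) -> None:
        """Grant `capability` unless it would complete a forbidden combination."""
        prospective = self.used | {capability}
        for combo in FORBIDDEN_COMBINATIONS:
            if combo <= prospective:
                names = ", ".join(sorted(c.name for c in combo))
                raise PolicyViolation(
                    f"{capability.name} denied: session would combine {names}"
                )
        self.used.add(capability)


if __name__ == "__main__":
    session = AgentSession()
    session.request(Capability.READ_SENSITIVE)  # allowed
    session.request(Capability.EXECUTE_CODE)    # allowed
    try:
        session.request(Capability.COMMUNICATE_EXTERNALLY)
    except PolicyViolation as err:
        print(err)
```

The check runs against everything the session has already done, not just the current call, because each step of an exfiltration (read a finance record, then make an outbound request) looks harmless on its own.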

The most important thing NemoClaw offers is a properly audited and maintained open-source product, in which the massive backlog of exploitable security issues can actually be addressed.

"Today, we're announcing a coalition to partner with us to make Nemotron 4 even more amazing. And that coalition has some amazing companies in it. Black Forest Labs, imaging company; Cursor, the famous coding company, we use lots of it; LangChain, billion downloads for creating custom agents; Mistral... incredible, incredible company. Perplexity, Perplexity computer, absolutely use it. Everybody use it. It is so good, a multimodal agentic system; Reflection; Sarvam from India; Thinking Machines, Mira Murati's lab, incredible companies joining us... I said that every single enterprise company, every single software company in the world needs an agentic systems, need an agent strategy. You need to have an OpenClaw strategy, and they all agree. And they're all partnering with us to integrate NeMo, the NemoClaw reference design, the NVIDIA agentic AI toolkit and of course, all of our open models."

More interestingly, this will not be limited to just forking OpenClaw. NVIDIA is pushing for compatibility with hundreds of providers, curiously including Thinking Machines.

Jensen is pushing close to 1GW of compute toward Thinking Machines over the next 3 years and will partially fund it. As a reminder, the lab recently lost most of its founding team and has yet to deliver an actual product to market. Why would NVIDIA push a significant investment toward them at this stage, while also doing some peculiar things with OpenClaw and its own family of open-source models, Nemotron?

Well, it has something to do with a five-layered cake.

AI is one of the most powerful forces shaping the world today. It is not a clever app or a single model; it is essential infrastructure, like electricity and the internet.

AI runs on real hardware, real energy, and real economics. It takes raw materials and converts them into intelligence at scale. Every company will use it. Every country will build it.

To understand why AI is unfolding this way, it helps to reason from first principles and look at what has fundamentally changed in computing.

From Pre‑Recorded Software to Real‑Time Intelligence

For most of computing history, software was pre‑recorded. Humans described an algorithm. Computers executed it. Data had to be carefully structured, stored into tables, and retrieved through precise queries. SQL became indispensable because it made that world workable.

AI breaks that model.

For the first time, we have a computer that can understand unstructured information. It can see images, read text, hear sound, and understand meaning. It can reason about context and intent. Most importantly, it generates intelligence in real time.

Every response is newly created. Every answer depends on the context you provide. This is not software retrieving stored instructions. This is software reasoning and generating intelligence on demand.

Because intelligence is produced in real time, the entire computing stack beneath it had to be reinvented.

AI as Infrastructure

When you look at AI industrially, it resolves into a five-layer stack.

Energy

At the foundation is energy. Intelligence generated in real time requires power generated in real time. Every token produced is the result of electrons moving, heat being managed, and energy being converted into computation. There is no abstraction layer beneath this. Energy is the first principle of AI infrastructure and the binding constraint on how much intelligence the system can produce.
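The “binding constraint” claim can be made concrete with a simple identity. This is my own framing, not NVIDIA’s: $P$ is sustained power and $\eta$ is a hypothetical efficiency term (tokens delivered per joule) that folds together chips, cooling and model size. The essay gives no value for $\eta$, so none is assumed here.

$$
\text{tokens per second} = P\,[\text{W}] \times \eta\,[\text{tokens/J}]
$$

For a site drawing a constant 1 GW for a full year:

$$
E = 10^{9}\,\text{W} \times 3.1536\times10^{7}\,\text{s} \approx 3.15\times10^{16}\,\text{J}\ (8.76\,\text{TWh}), \qquad N_{\text{tokens}} = E \times \eta
$$

With power fixed by the grid connection, the only way to get more intelligence out of the same site is to raise $\eta$, which is exactly the job the chip layer is pitched as doing.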

Chips

Above energy are the chips. These are processors designed to transform energy into computation efficiently at massive scale. AI workloads require enormous parallelism, high-bandwidth memory, and fast interconnects. Progress at the chip layer determines how fast AI can scale and how affordable intelligence becomes.

Infrastructure

Above chips is infrastructure. This includes land, power delivery, cooling, construction, networking, and the systems that orchestrate tens of thousands of processors into one machine. These systems are AI factories. They are not designed to store information. They are designed to manufacture intelligence.

Models

Above infrastructure are the models. AI models understand many kinds of information: language, biology, chemistry, physics, finance, medicine, and the physical world itself. Language models are only one category. Some of the most transformative work is happening in protein AI, chemical AI, physical simulation, robotics, and autonomous systems.

Applications

At the top are applications, where economic value is created. Drug discovery platforms. Industrial robotics. Legal copilots. Self-driving cars. A self-driving car is an AI application embodied in a machine. A humanoid robot is an AI application embodied in a body. Same stack. Different outcomes.

In this essay, NVIDIA is essentially describing the ecosystem of cloud infrastructure software, but reframing it around a "five-layer cake" analogy.

That is the five-layer cake:

Energy → chips → infrastructure → models → applications.

Every successful application pulls on every layer beneath it, all the way down to the power plant that keeps it alive.
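The dependency rule in that sentence is simple enough to state as code. A minimal sketch: the `Layer` enum and `layers_pulled_by` helper are illustrative names of my own, and the model deliberately ignores magnitudes, encoding only which layers feel demand from a given layer.

```python
from enum import IntEnum


class Layer(IntEnum):
    """The five layers of the stack, numbered bottom (energy) to top (applications)."""
    ENERGY = 1
    CHIPS = 2
    INFRASTRUCTURE = 3
    MODELS = 4
    APPLICATIONS = 5


def layers_pulled_by(layer: Layer) -> list[Layer]:
    """Return every layer beneath `layer`, nearest first, down to energy."""
    return [other for other in sorted(Layer, reverse=True) if other < layer]


if __name__ == "__main__":
    for layer in Layer:
        below = " -> ".join(l.name for l in layers_pulled_by(layer)) or "(nothing)"
        print(f"{layer.name:<14} pulls on: {below}")
```

Application demand reaches all four lower layers, while energy, at the bottom, pulls on nothing beneath it.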

We have only just begun this buildout. We are a few hundred billion dollars into it. Trillions of dollars of infrastructure still need to be built.

Directionally correct.

Around the world, we are seeing chip factories, computer assembly plants, and AI factories being constructed at unprecedented scale. This is becoming the largest infrastructure buildout in human history.

The labor required to support this buildout is enormous. AI factories need electricians, plumbers, pipefitters, steelworkers, network technicians, installers, and operators.

These are skilled, well-paid jobs, and they are in short supply. You do not need a PhD in computer science to participate in this transformation.

At the same time, AI is driving productivity across the knowledge economy. Consider radiology. AI now assists with reading scans, but demand for radiologists continues to grow. That is not a paradox.

A radiologist’s purpose is to care for patients. Reading scans is one task along the way. When AI takes on more of the routine work, radiologists can focus on judgment, communication, and care. Hospitals become more productive. They serve more patients. They hire more people.

Productivity creates capacity. Capacity creates growth.

What Changed in the Last Year?

In the past year, AI crossed an important threshold. Models became good enough to be useful at scale. Reasoning improved. Hallucinations dropped. Grounding improved dramatically. For the first time, applications built on AI began generating real economic value.

Applications in drug discovery, logistics, customer service, software development, and manufacturing are already showing strong product-market fit. These applications pull hard on every layer beneath them.

Open-source models play a critical role here. Most of the world’s models are free. Researchers, startups, enterprises, and entire nations rely on open models to participate in advanced AI. When open models reach the frontier, they do not just change software. They activate demand across the entire stack.

DeepSeek-R1 was a powerful example of this. By making a strong reasoning model widely available, it accelerated adoption at the application layer and increased demand for training, infrastructure, chips, and energy beneath it.

What This Means

When you see AI as essential infrastructure, the implications become clear.

AI starts with a transformer LLM. But it’s much more. It is an industrial transformation that reshapes how energy is produced and consumed, how factories are built, how work is organized, and how economies grow.

AI factories are being built because intelligence is now generated in real time. Chips are being redesigned because efficiency determines how fast intelligence can scale. Energy becomes central because it sets the ceiling on how much intelligence can be produced at all. Applications accelerate because the models beneath them have crossed a threshold where they are finally useful at scale.

Every layer reinforces the others.

This is why the buildout is so large. This is why it touches so many industries at once. And this is why it will not be confined to a single country or a single sector. Every company will use AI. Every nation will build it.

We are still early. Much of the infrastructure does not yet exist. Much of the workforce has not yet been trained. Much of the opportunity has not yet been realized.

But the direction is clear.

AI is becoming the foundational infrastructure of the modern world. And the choices we make now, how fast we build, how broadly we participate, and how responsibly we deploy it, will shape what this era becomes.

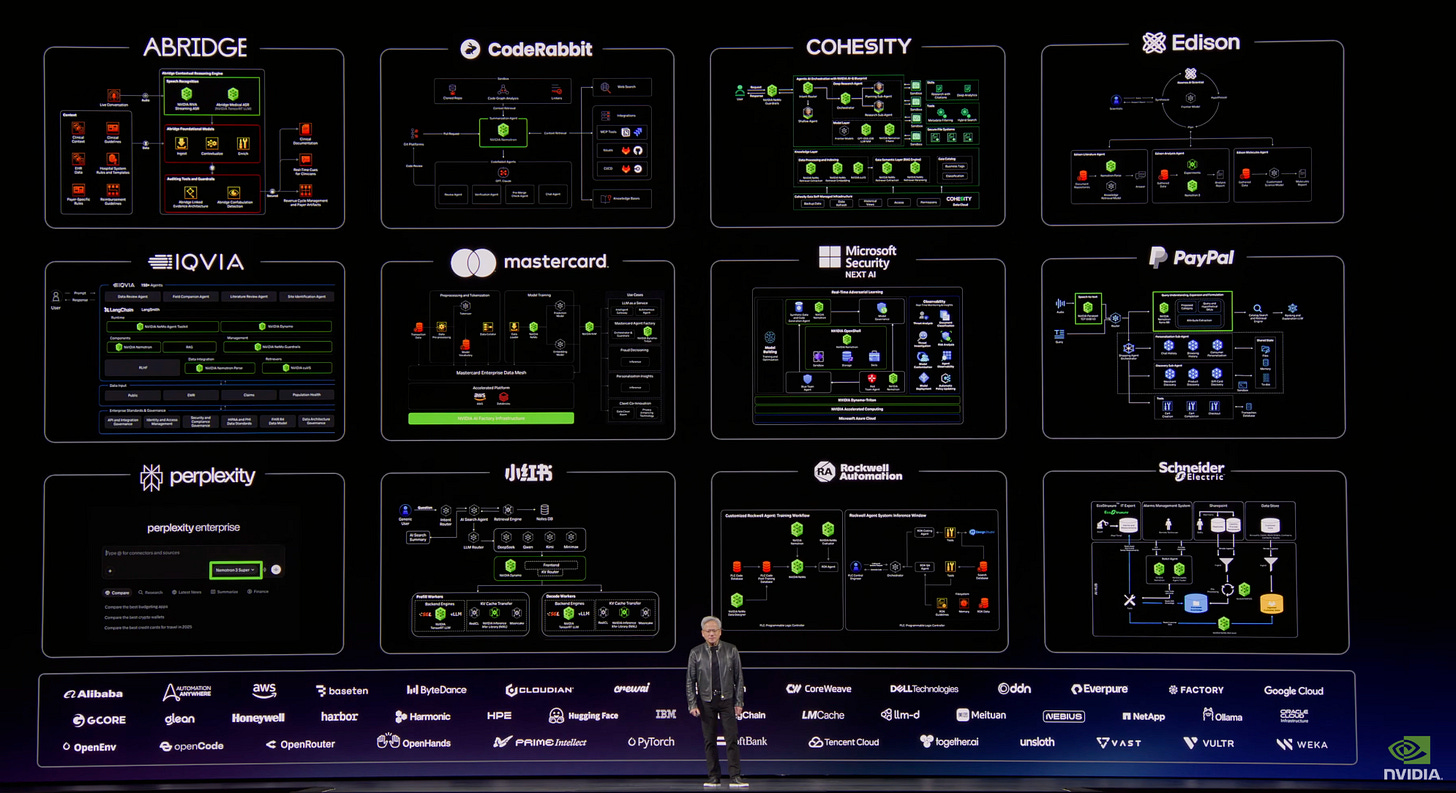

I think the main thing we are seeing from NVIDIA this year is that it is no longer going to sit still at the application layer. Previously it was more interested in playing the hyperscaler angle (which didn’t go anywhere) or in scaling its dedicated manufacturing software (Omniverse); this is the first time we are seeing direct interest in the Enterprise application layer for regular companies.

Agents are an extremely lucrative opportunity for the company for an obvious reason: they significantly drive up token usage. With OpenAI still slowly repositioning toward that market, and Anthropic interested only in driving vertical integration for its own tools (which mostly run on Google infrastructure), it’s not surprising to see NVIDIA making a push in that direction.

The most peculiar thing is that regardless of how this plays out over the year, I wouldn’t be surprised to see very little material difference in market cap. Jensen has addressed this as well by returning 50% of NVIDIA’s free cash flow to shareholders through buybacks and dividends.

And this is how the most valuable company in the world became a...dividend compounder.