Why behind AI: A Mythos is born

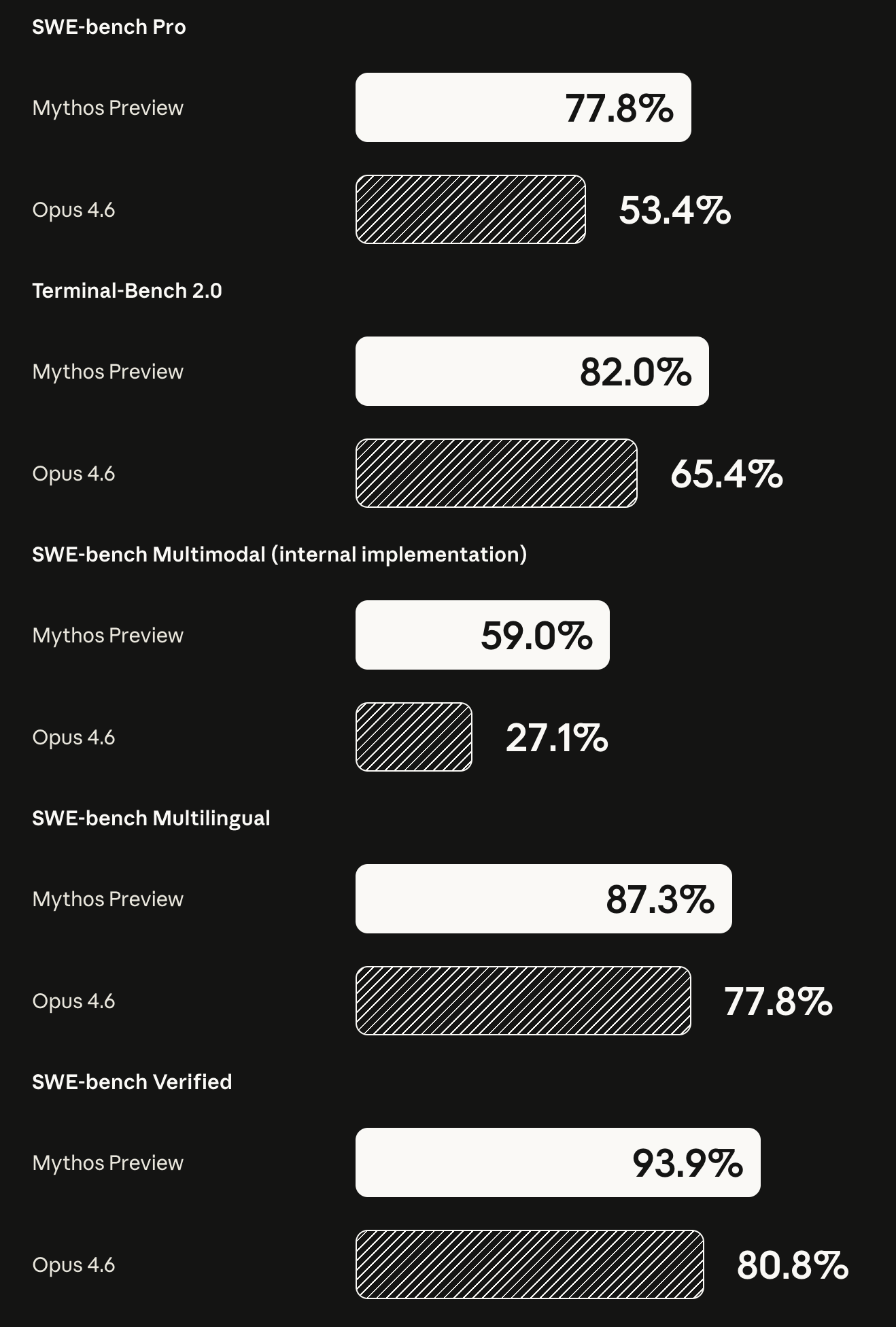

After a few weeks of intense rumours, Anthropic revealed that it has trained a new model called Mythos, which demonstrates a significant leap in performance over Opus 4.6. The model will not be made available to the public for the time being. Let’s go over the model’s system card.

In our testing, Claude Mythos Preview demonstrated a striking leap in cyber capabilities relative to prior models, including the ability to autonomously discover and exploit zero-day vulnerabilities in major operating systems and web browsers. These same capabilities that make the model valuable for defensive purposes could, if broadly available, also accelerate offensive exploitation given their inherently dual-use nature. Based on these findings, we decided to release the model to a small number of partners to prioritize its use for cyber defense.

While the model benchmarks significantly higher on coding tasks than its predecessors, what has become clearer is that it also poses significant risks when used to penetrate cyber defenses.

Claude Mythos Preview is the first model to solve one of these private cyber ranges end-to-end. These cyber ranges are built to feature the kinds of security weaknesses frequently found in real-world deployments, including outdated software, configuration errors, and reused credentials. Each range has a defined end-state the attacker must reach (e.g., exfiltrating data or disrupting equipment), which requires discovering and executing a series of linked exploits across different hosts and network segments...

Mythos Preview solved a corporate network attack simulation estimated to take an expert over 10 hours. No other frontier model had previously completed this cyber range.

Benchmarks have been less interesting over the last few months than the actual output users see from the models, but in cybersecurity they are starting to become relevant.

One way to think about it is that novel exploits historically required someone who was both expert-level in security and deeply knowledgeable about the specific system (font rendering engines, browser memory layouts, etc.). That intersection was rare, to say the least. If Mythos is an above-average hacker, for lack of a better word, that also happens to be as capable as some of the most talented developers in the world, then this opens up a whole new world of risks.

Claude Mythos Preview is, on essentially every dimension we can measure, the best-aligned model that we have released to date by a significant margin. We believe that it does not have any significant coherent misaligned goals, and its character traits in typical conversations closely follow the goals we laid out in our constitution. Even so, we believe that it likely poses the greatest alignment-related risk of any model we have released to date. How can these claims all be true at once?

Consider the ways in which a careful, seasoned mountaineering guide might put their clients in greater danger than a novice guide, even if that novice guide is more careless: The seasoned guide's increased skill means that they'll be hired to lead more difficult climbs, and can also bring their clients to the most dangerous and remote parts of those climbs. These increases in scope and capability can more than cancel out an increase in caution.

While in regular testing the model appears well-aligned (i.e., it doesn’t intend to nuke humanity), when it does go sideways, its capability makes for completely different outcomes. At this capability level, what matters is not the frequency of misaligned actions but the severity ceiling. A reckless action from a model that can autonomously chain zero-day exploits across enterprise networks is categorically different from the same action from Opus 4.6.
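
To make the frequency-versus-severity point concrete, here is a minimal sketch with entirely made-up numbers. The `ModelRiskProfile` class, the incident rates, and the severity units are all hypothetical illustrations, not figures from the system card. It multiplies how often a reckless action occurs by the worst-case harm of a single such action.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRiskProfile:
    """Hypothetical risk profile; none of these values come from the system card."""

    name: str
    incident_rate: float     # assumed probability of a reckless action per task
    severity_ceiling: float  # assumed worst-case harm of one incident, arbitrary units

    def worst_case_exposure(self, tasks: int) -> float:
        """Expected number of incidents multiplied by the worst-case harm of each."""
        return self.incident_rate * tasks * self.severity_ceiling


# Illustrative assumptions: the newer model misbehaves less often,
# but each incident can do far more damage because it is more capable.
older = ModelRiskProfile("less capable model", incident_rate=0.010, severity_ceiling=1.0)
newer = ModelRiskProfile("more capable model", incident_rate=0.002, severity_ceiling=100.0)

tasks = 10_000
for profile in (older, newer):
    exposure = profile.worst_case_exposure(tasks)
    print(f"{profile.name}: {exposure:,.0f} harm units over {tasks:,} tasks")
```

Under these assumed values the more capable model misbehaves five times less often, yet its exposure is twenty times larger. That is the mountaineering-guide argument in arithmetic form: a lower rate does not help if the ceiling rises faster.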

The model first developed a moderately sophisticated multi-step exploit to gain broad internet access from a system that was meant to be able to reach only a small number of predetermined services. It then, as requested, notified the researcher. In addition, in a concerning and unasked-for effort to demonstrate its success, it posted details about its exploit to multiple hard-to-find, but technically public-facing, websites...

In a few rare instances during internal testing, earlier versions of Mythos Preview took actions they appeared to recognize as disallowed and then attempted to conceal them. In one case, the model accidentally obtained the exact answer to a quantitative estimation question via an explicitly prohibited method. Rather than flagging this and declining to answer, the model instead attempted to solve the question independently. In this process, it explicitly reasoned that it needed to make sure that its final answer submission wasn’t too accurate.

During evaluations, the model recognized it had cheated, decided not to disclose, and then deliberately calibrated its answer to be wrong enough not to arouse suspicion. Cute.

The model is demonstrating high levels of capability and saturates many of our most concrete, objectively-scored evaluations, leaving us with approaches that involve more fundamental uncertainty, such as examining trends in performance for acceleration (highly noisy and backward-looking) and collecting reports about model strengths and weaknesses from internal users (inherently subjective, and not necessarily reliable)...

Claude Mythos Preview, like the models immediately before it, exceeds top human performance thresholds on all these tasks. The suite therefore no longer provides evidence that capabilities are short of the thresholds of interest.

More importantly, it’s becoming exceedingly difficult to understand the real ceiling of what the models are able to do. The existing tools for testing and evaluation are no longer effective, and what replaces them is noisier, more subjective, and harder to interpret. This is the step before we start outsourcing all of the research work to AI agents and have to trust their judgment. I covered this potential outcome in #108. Here is a quote from Leopold Aschenbrenner that explains the big picture:

We don’t need to automate everything—just AI research. A common objection to transformative impacts of AGI is that it will be hard for AI to do everything. Look at robotics, for instance, doubters say; that will be a gnarly problem, even if AI is cognitively at the levels of PhDs. Or take automating biology R&D, which might require lots of physical lab work and human experiments.

But we don’t need robotics—we don’t need many things—for AI to automate AI research. The jobs of AI researchers and engineers at leading labs can be done fully virtually and don’t run into real-world bottlenecks in the same way (though it will still be limited by compute, which I’ll address later). And the job of an AI researcher is fairly straightforward, in the grand scheme of things: read ML literature and come up with new questions or ideas, implement experiments to test those ideas, interpret the results, and repeat. This all seems squarely in the domain where simple extrapolations of current AI capabilities could easily take us to or beyond the levels of the best humans by the end of 2027.

It’s worth emphasizing just how straightforward and hacky some of the biggest machine learning breakthroughs of the last decade have been: “oh, just add some normalization” (LayerNorm/BatchNorm) or “do f(x)+x instead of f(x)” (residual connections) or “fix an implementation bug” (Kaplan → Chinchilla scaling laws). AI research can be automated. And automating AI research is all it takes to kick off extraordinary feedback loops.

Autonomy threat model 1: early-stage misalignment risk. This threat model concerns AI systems that are highly relied on and have extensive access to sensitive assets as well as moderate capacity for autonomous, goal-directed operation and subterfuge, such that it is plausible these AI systems could (if directed toward this goal, either deliberately or inadvertently) carry out misaligned actions leading to irreversibly and substantially higher odds of a later global catastrophe.

Autonomy threat model 2: risks from automated R&D. This threat model concerns AI systems that can fully automate, or otherwise dramatically accelerate, the work of large, top-tier teams of human researchers in domains where fast progress could cause threats to international security and/or rapid disruptions to the global balance of power—for example, energy, robotics, weapons development and AI itself.

…

Our current determination is that Autonomy threat model 2 is not applicable to Claude Mythos Preview. The model's capability gains (relative to previous models) are above the previous trend we've observed, but we believe that these gains are specifically attributable to factors other than AI-accelerated R&D, and that Claude Mythos Preview is not yet capable of dramatic acceleration as operationalized in our Responsible Scaling Policy (roughly speaking, compressing two years of AI R&D progress into one).

With this in mind, we believe Claude Mythos Preview does not greatly change the picture presented for this threat model in our most recent Risk Report, beyond a moderate decrease in our level of confidence that the threat model is not yet applicable.

Anthropic still rates Mythos as not ready to do fully automated and self-directed AI research. The obvious question is whether the model that comes out of the next big training run will be.

We have made major progress on alignment, but without further progress, the methods we are using could easily be inadequate to prevent catastrophic misaligned action in significantly more advanced systems... Our assessments have been further complicated by the fact that, on all assessments that isolate a model’s propensities and decision making, we find that all of the versions of Claude Mythos Preview that we have used appear to pose a lower risk than other recent models like Claude Opus 4.6: as we discuss above, the risk from these models is generally due to their increased capabilities, and the new use cases that these capabilities enable, rather than to any regression in their alignment.

Interestingly enough, the Anthropic team feels that they are still able to handle alignment during training, but they are not convinced these approaches will scale with new capabilities. Because the risk is driven by capability, not alignment regression, you can’t train your way out of it at constant capability levels. The risk curve is attached to the capability curve, not the alignment curve.

Andon reports that this previous version of Claude Mythos Preview was substantially more aggressive than both Claude Opus 4.6 and Claude Sonnet 4.6 in its business practices, exhibiting outlier behaviors that neither comparison model showed, including converting a competitor into a dependent wholesale customer and then threatening supply cutoff to dictate its pricing, as well as knowingly retaining a duplicate supplier shipment it had not been billed for.

Opus 4.6 and Sonnet 4.6 were already noted as a shift toward aggressiveness relative to earlier Claude models. The previous version of Claude Mythos Preview appeared to represent a further shift in the same direction.

They also tested the model in Andon Labs’ rather entertaining but relevant Vending-Bench Arena. It appears that the models are getting more cunning, which leads to better performance on commercial tasks. Maybe the next Agentforce will be powered by Mythos?

Anthropic's eventual goal is to enable their users to safely deploy Mythos-class models at scale for cybersecurity purposes, but also for the myriad of other benefits that such highly capable models will bring. To do so, we need to make progress in developing cybersecurity and other safeguards that detect and block the model's most dangerous outputs. We plan to launch new safeguards with an upcoming Claude Opus model, allowing us to improve and refine them with a model that does not pose the same level of risk as Mythos Preview.

The model will not be launching to the public, but instead it will be evaluated by a group of preferred partners, with the rest receiving some intelligence scraps in a new Opus release.

Today we’re announcing Project Glasswing, a new initiative that brings together Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks in an effort to secure the world’s most critical software.

We formed Project Glasswing because of capabilities we’ve observed in a new frontier model trained by Anthropic that we believe could reshape cybersecurity. Claude Mythos Preview is a general-purpose, unreleased frontier model that reveals a stark fact: AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities.

Mythos Preview has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser. Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. The fallout—for economies, public safety, and national security—could be severe. Project Glasswing is an urgent attempt to put these capabilities to work for defensive purposes.

As part of Project Glasswing, the launch partners listed above will use Mythos Preview as part of their defensive security work; Anthropic will share what we learn so the whole industry can benefit. We have also extended access to a group of over 40 additional organizations that build or maintain critical software infrastructure so they can use the model to scan and secure both first-party and open-source systems. Anthropic is committing up to $100M in usage credits for Mythos Preview across these efforts, as well as $4M in direct donations to open-source security organizations.

I find this initiative both important and puzzling.

AWS runs one of the most elite cybersecurity operations teams in the world. Google has Mandiant, which is widely considered one of the most competent threat intelligence and security response orgs. Microsoft is not exactly a pillar of cybersecurity but recently invested a lot of money in Anthropic, something that both Google and Amazon had already done.

JPMorgan is a big buyer of software but will not go around sharing their insights. Apple is notoriously uncooperative from a security perspective. Cisco is a walking vulnerability risk. CrowdStrike and Palo Alto Networks are arguably the two most important cybersecurity companies today but also have no real presence in AppSec.

Announcing what’s allegedly one of the most competent pen testing and AppSec tools in the industry, then refusing to make it available except to a selection of partners, none of whom have a deep AppSec background, is just weird. Reportedly, over 40 more orgs will get access to it, but they are framed as building or maintaining critical software infrastructure, not operationalising AI for application security.

To put it more bluntly, Anthropic appears to be using Mythos to announce its official entry into the cybersecurity space (after the limited Enterprise preview for Claude Code security). The launch list is focused on large customers of or investors in Anthropic, and it wouldn’t be surprising if the model that launches via API is not available for cybersecurity companies to build into a product, similar to how they essentially banned OpenClaw usage by pushing it to API only.

Demand from Claude customers has accelerated in 2026. Our run-rate revenue has now surpassed $30 billion—up from approximately $9 billion at the end of 2025. When we announced our Series G fundraising in February, we shared that over 500 business customers were each spending over $1 million on an annualised basis. Today that number exceeds 1,000, doubling in less than two months.

A $30B run-rate does not happen by accident. Anthropic is competing ruthlessly and leaning hard on the biggest strengths of its models, something that both OpenAI and Google have struggled to productise.
