Infra Play #142: AI hyperscaling is hard work

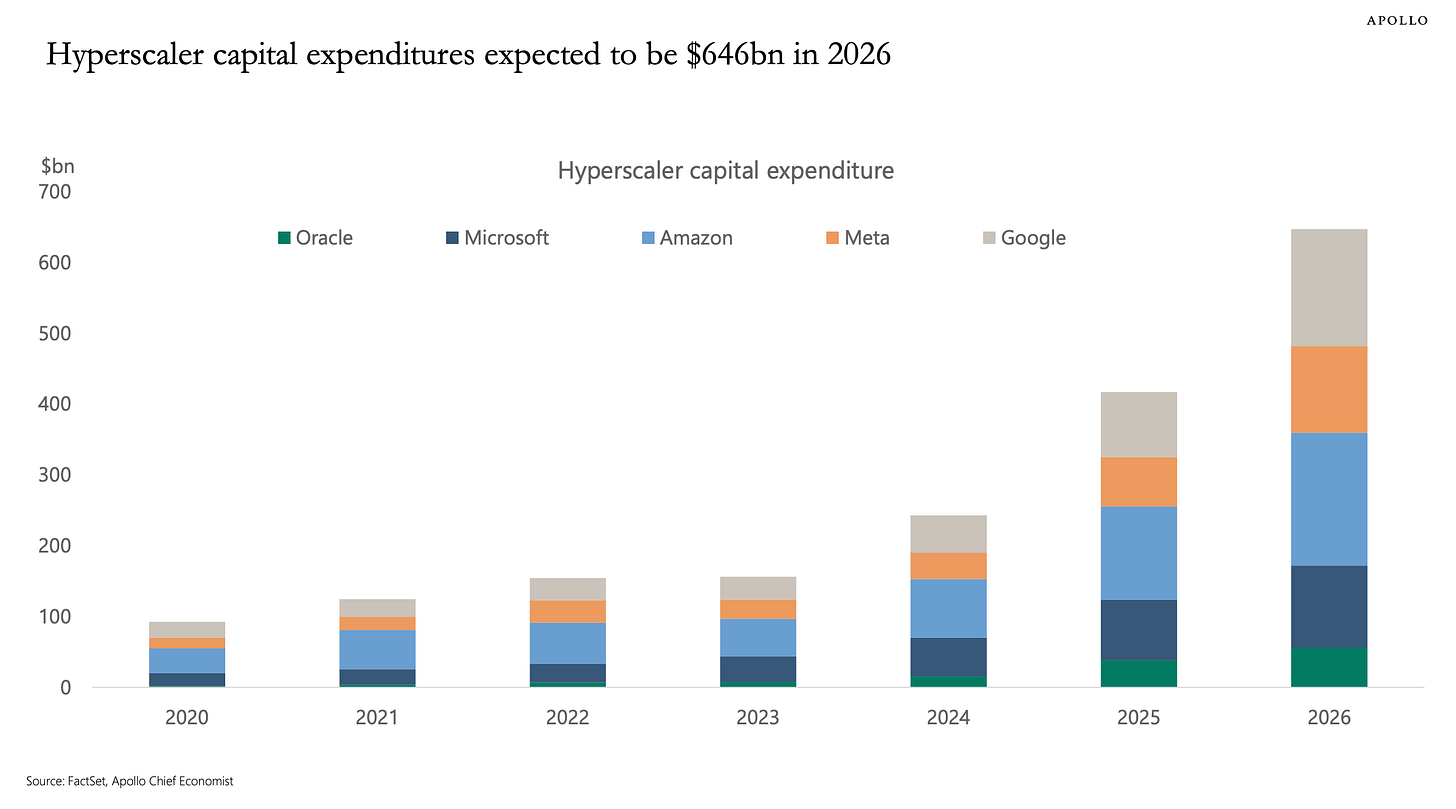

I've been tracking hyperscaler earnings since the AI infrastructure buildout started in 2024, and this week was easily the highest-stakes moment of the race so far. The market needed two key pieces of information: their CapEx plans to add more hardware and whether sales performance justified the investment.

Well, we got our answer, and the short summary is that there are no brakes on the AI train right now.

GCP

Sundar Pichai, CEO, Alphabet and Google: Hi, everyone, and thanks for joining us today. It was a terrific quarter for Alphabet. Our momentum was on full display at Cloud Next last week, and the month of May brings even more with I/O, Brandcast and GML. I hope you’ll tune in to see our progress.

It’s clear that our AI investments and full stack approach are driving performance across our business.

In Search & Other, revenue grew 19%. People love our AI experiences like AI Mode and AI Overviews, and they’re coming back to Search more.

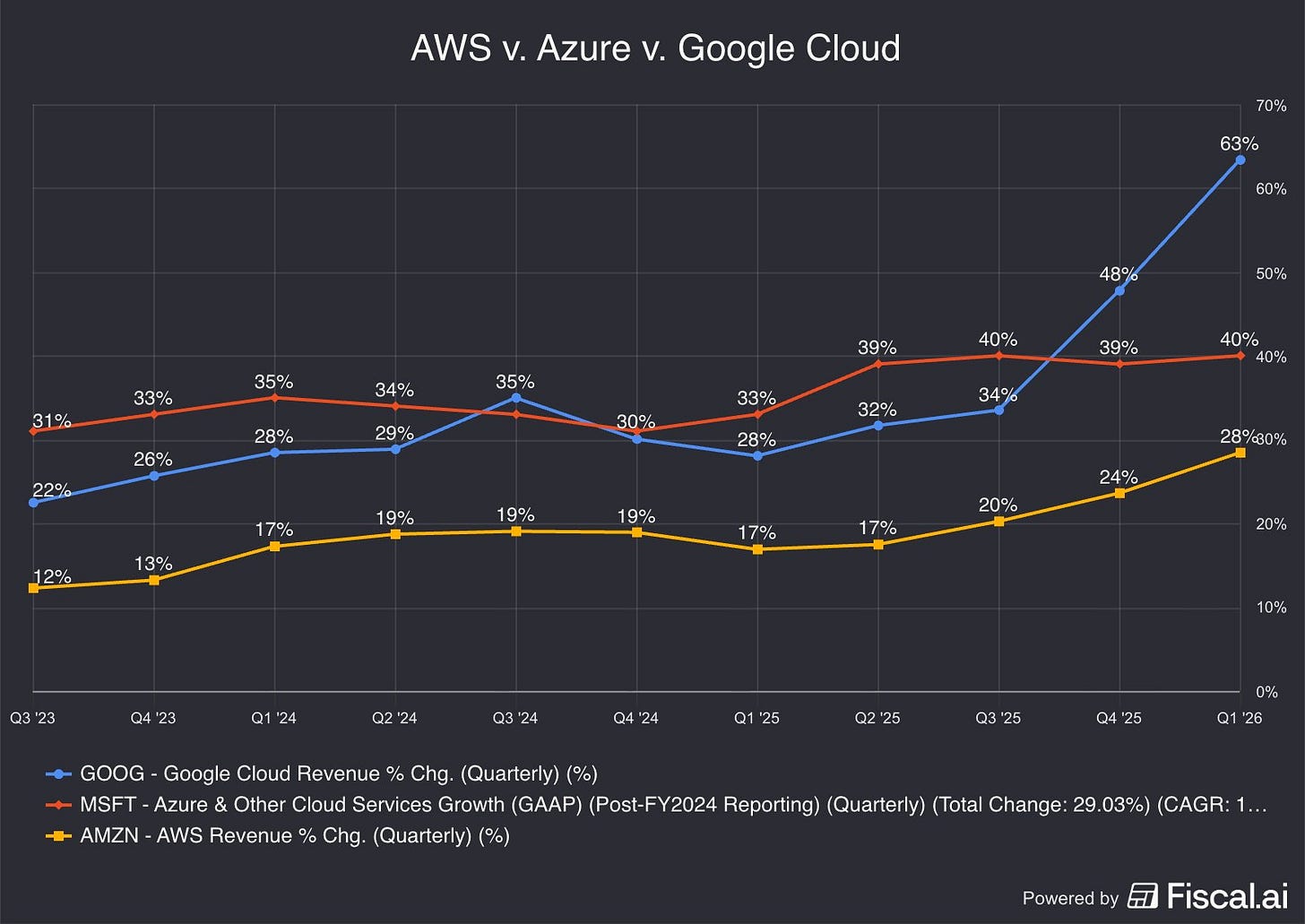

Cloud accelerated again this quarter due to strong demand for our AI products and infrastructure. Revenue grew 63%, exceeding $20 billion for the first time, and our backlog nearly doubled quarter on quarter to over $460 billion. Gemini Enterprise is seeing tremendous momentum, with 40% growth quarter over quarter in paid monthly active users.

In Subscriptions, this was our strongest quarter ever for our consumer AI plans, primarily driven by adoption of the Gemini App. Overall, the number of paid subscriptions has now reached 350 million, with YouTube and Google One being the key drivers.

And our AI models have great momentum. Our first party models now process more than 16 billion tokens per minute via direct API use by our customers, up from 10 billion last quarter.

After two years of struggling to meaningfully accelerate growth, GCP has had an outstanding six months.

Sundar Pichai: Starting with our AI infrastructure. It’s the foundation of our full stack approach to AI, driving customer growth and product adoption.

Our custom TPUs, Axion CPUs, and the latest NVIDIA GPUs continue to form the industry’s widest variety of compute options. NVIDIA GPUs are a core part of our AI accelerator portfolio, and we will be among the first to offer NVIDIA Vera Rubin NVL72, in addition to the Blackwell- and Hopper-based instances already available.

At Cloud Next, we introduced our eighth generation TPUs, individually specialized for training and serving, and able to take on the most demanding agentic workloads.

TPU 8t provides high performance model training with three times the processing power of Ironwood and two times the performance.

TPU 8i delivers cost effective, low latency inference, with 80% better performance per dollar than the prior generation.

This exceptional infrastructure powers our world class AI research. That includes models and tooling, which continue to progress really well.

While there are meaningful concerns about how TPUs will remain competitive, particularly if Google shifts production away from Broadcom, every accelerated computing rack is worth its weight in gold at a time when Nvidia accelerators remain at full capacity and premium pricing.

Sundar Pichai: Gemini 3.1 Pro continues to push the frontier in reasoning, multimodal understanding and cost. We’ve quickly expanded the Gemini 3.1 series of models to offer more choices for developers, including our cost efficient Flash models.

3.1 Flash Live, our latest audio model, has improved precision and reasoning, making voice interactions more natural and intuitive. It’s now powering conversational features in Search and the Gemini app. Speech to text is now available in 70 languages.

And with 3.1 Pro, our Deep Research agent got a big upgrade, including MCP support and native visualizations.

Our generative media models are incredibly popular. Lyria 3 has generated over 150 million songs since launching on the Gemini App.

Nano Banana 2 reached one billion images in nearly half the time of Nano Banana 1.

And Veo 3.1 Lite is our most cost efficient video model to date.

On top of this, we launched Gemma 4, our most intelligent open model. It’s been downloaded over 50 million times in just a few weeks. In fact, our open models have now been downloaded over 500 million times.

On the model side, Google has clearly taken a step back in the eyes of the "AI power user," as Anthropic and OpenAI continue to trade blows over the best coding model in the world. Still, Google has optimized for a slightly different game, using Gemini for easy API integrations and more straightforward agentic use cases like customer support. Between efficient models, their own silicon, and aggressive pricing, Gemini has clearly found enterprise product-market fit.

Sundar Pichai: Looking ahead, we are focused on pushing the next frontiers of foundation models, including intelligence, agents and agentic coding. And we are using the latest technologies to transform how we work as a company.

For example, with Antigravity, we are shifting to truly agentic workflows. Our engineers are now orchestrating fully autonomous digital task forces, and building at a faster velocity. Much more to come here.

Nobody believes in Antigravity right now except Sergey Brin.

Sundar Pichai: We continue to invest in improvements to AI Overviews, which are driving overall Search growth, and we are also seeing strong growth in both users and usage of AI Mode globally.

Personal Intelligence expanded broadly in the U.S. and we are seeing people ask more personal questions, and getting responses that are uniquely relevant to them.

We also shipped agentic experiences, like restaurant booking, to new countries and new multimodal capabilities like Search Live globally.

We’re also continuing to improve efficiency and speed. Even as we have brought new AI features into our results page, we have reduced Search latency by more than 35% over the past five years.

And since upgrading AI Overviews and AI Mode to Gemini 3, we have reduced the cost of core AI responses by more than 30%, thanks to continued hardware and engineering breakthroughs.

AI Overviews in Google Search, however, remain probably the most powerful "free AI" product in the industry right now. They have mostly killed the value proposition of dedicated tools like Perplexity, since most quick searches that benefit from AI summaries already work perfectly out of the box with AI Overviews. Whether this drives meaningful commercial conversion through advertisements or subscriptions is a separate question.

Sundar Pichai: Google Cloud is differentiated because we are the only provider to offer first party solutions across the entire Enterprise AI stack. Our growth in revenue, operating margin, and backlog highlights this differentiation. Our Enterprise AI solutions have become our primary growth driver for Cloud for the first time. In Q1, revenue from products built on our gen AI models grew nearly 800% year over year.

We are winning new customers faster, with new customer acquisition doubling compared to the same period last year.

We are seeing strong deal momentum, doubling the number of $100 million to $1 billion deals year on year, and signing multiple billion dollar plus deals. And we are deepening relationships with existing customers. Customers outpaced their initial commitments by 45%, accelerating over last quarter.

At Cloud Next last week, we introduced hundreds of new capabilities across our vertically optimized AI stack that are designed to work together for our enterprise customers.

Coming back to GCP, we are seeing a number of strong signals, including new logo acceleration alongside more large deals. At hyperscaler scale, a big-ticket deal measures in the billions.

Sundar Pichai: We introduced a new Gemini Enterprise Agent Platform that empowers users to build, orchestrate, govern and optimize agents with the controls that enterprise customers need.

Along with new capabilities in Gemini Enterprise app — like Projects, Canvas, Long Running Agents and Skills, every employee can build agents.

In Q1, Gemini Enterprise paid monthly active users grew 40% quarter over quarter. That includes major global brands like Bosch, Citi Wealth, Merck, and Mars, Incorporated.

Our partner ecosystem plays an increasingly critical role in driving Gemini Enterprise adoption. We saw 9x year over year growth both in seats sold with partners, and in the number of partners adopting it for internal use.

This momentum is leading to accelerating usage of our models.

Which brings us to actual adoption momentum: large companies are still trying to figure out how to scale AI agents for internal use cases while juggling difficult internal budgets. I am directly involved in one of the accounts mentioned here, and I have observed this play out firsthand.

Sundar Pichai: Over the past 12 months, 330 Google Cloud customers each processed over one trillion tokens. 35 reached the ten trillion token milestone.

To give agents business context from enterprise data to help them reason intelligently, we introduced a new Agentic Data Cloud. It includes a cross cloud Lakehouse, Knowledge Catalog and Deep Research Agents, which combine research and analytical skills.

As an example, using our data cloud, American Express is enabling agentic commerce at scale by moving an enterprise data platform, along with hundreds of production applications, to BigQuery. Vodafone is proactively resolving outages, automating network planning and precisely targeting capacity.

Enterprise data has become critical for agents to reason. Our strength with BigQuery and Gemini Enterprise has led Gemini powered workflows in BigQuery to grow over 30X year over year.

The enterprise AI upsell is, of course, not limited to Gemini inference; it extends across the full technology stack around those applications. BigQuery has been a significant upsell for GCP reps, but I don't know how "sticky" it will be long term. New players like ClickHouse have been winning workloads by offering better performance at half the price, and that's not something cost-sensitive engineering teams will ignore.

Sundar Pichai: As cybersecurity threats from the use of AI models accelerate, our expertise in AI and Cybersecurity is driving strong demand for our Agentic Defense offerings. In March, we closed the acquisition of Wiz, a leading cloud and security AI platform, which is an incredible fit for the moment we are in. We’ve seen tremendous interest from customers in our unique cybersecurity and AI products and services to protect their IT estate. The performance of Wiz so far has exceeded our expectations.

Together with Google’s Threat Intelligence, Security Operations and AI models, Wiz is helping organizations detect, prevent and respond to threats.

We introduced new Gemini powered agents for threat detection, continuous red teaming, and automated remediation to protect software code and Cloud systems.

Customers like Deloitte, Priceline and Shell are using our agentic defense to strengthen their security posture. All of this is powered by the AI Infrastructure I mentioned earlier.

Interestingly, cybersecurity makes a return to an Alphabet earnings call, with a high-level blurb but no meaningful adoption figures. I still think the GCP team doesn't know how to drive proper security deals, and unless something changes, this will remain an underperforming part of the portfolio, regardless of the strong fundamentals.

Michael Nathanson (MoffettNathanson): Thanks. One for Sundar, one for Philipp.

Sundar, if I can connect Brian’s question and Eric’s question and go a little bit higher, I want to understand how are you deciding, how are you allocating which divisions and projects get excess capacity, even though you’re constrained? How do you decide between all the internal projects you have and the external projects? What types of screens are you running to decide who gets the ample capacity?

Then for Philipp, I noticed that you said this on the Gemini app, there’s more and more images that come to you in the shopping journey. Can you talk about your thoughts about adding advertising on that app and what’s guiding your decision making here on adding ads on Gemini? Thanks.

Sundar Pichai, CEO, Alphabet and Google: Thanks, Michael. I think, great question on an ongoing basis, and I’m looking forward to Gemini helping me more and more as I’m thinking that through.

Look, I do think that the foundation where we start with it is, what do we need from a R&D standpoint to develop models at the frontier. So what do you need for training these models? And so, effectively, the compute needed for GDM, because it’s a foundation for everything we do. So that’s a core principle with which we operate.

Then obviously, the ability to plan ahead. We do long range plans on our core areas, be it Search, be it YouTube, and so on, as well as what we see in Google Cloud.

And obviously, in Google Cloud, we are providing enterprise AI solutions, which this quarter had an 800% year on year increase from the prior year. So we’re seeing strong demand for Gemini enterprise, our AI solutions there.

We see strong demand for infrastructure in Google Cloud. And as I said earlier, in certain cases, we are seeing demand for TPU hardware; TPU hardware in other data centers as well.

So we are modeling these out and working to allocate across these areas. Obviously, we are compute constrained in the near term. And as an example, our Cloud revenue would have been higher if we were able to meet the demand.

So we are working through that moment and we are investing, but we have a robust long range planning framework, and we see extraordinary opportunities ahead, and we are allocating with that framework in mind.

Philipp Schindler, SVP and CBO, Google: And to the second part of your question, as I said in my previous answer, we are obviously focused on the user first and creating a really great user experience with all of our product, especially on newer products; and specifically on monetization in the Gemini app, our focus right now is on AI Mode.

But it’s fair to say that we really believe the format that works well in AI Mode would transfer successfully to Gemini app. And so, today in the Gemini app, we’re focused on the free tier and subscriptions and our AI plans were a sizable contributor to our Google One revenue growth.

But let’s also be clear, Ads have always been a big part of scaling products to reach billions of people, and if done well, Ads can be really valuable and really helpful commercial information. At the right moment, we’ll share any plans, as we have said, but we’re not rushing anything here.

It's important to remember that GCP has historically been a largely ignored team inside Alphabet, with most of the leadership coming out of the Ads business. The beauty of sales performance is that at some point they can no longer ignore you, especially when the commercial teams are delivering those large commits with significant compute reserved for customers.

Justin Post (BAML): Thank you for taking my question. I expect a lot of interest in your TPU sales. Can you help us think about how you’re thinking about the opportunity there? And then maybe break down the backlog growth a little bit between TPUs and Cloud.

And then the second question. Just thinking about the margins on these big, generative AI Cloud deals, how do you think about these $100 billion deals coming in and the margins associated with those? Can they be similar to your Cloud business as it is? Thank you.

Sundar Pichai, CEO, Alphabet and Google: Look, overall, I would say we see tremendous interest and there’s tremendous demand for both AI solutions, as well as AI infrastructure, including massive interest in our GPU offerings, as well as our TPUs. So we are proud that we can provide customers with the breadth of our offerings and meet them in terms of where their needs are.

And maybe I’ll pass it to Anat to give some color on the backlog growth.

Anat Ashkenazi, SVP and CFO, Alphabet and Google: So the backlog, the TPU hardware agreements that Sundar referenced in his prepared remarks are reflected in our Cloud backlog, the $462 billion, although the majority of the backlog is still GCP agreements.

Now, as you think about the total backlog, just over half of it will convert to revenue in the next 24 months. And the TPU hardware sales, more specifically, we expect a small percent of them to see coming through as revenue later this year, and then the majority to be realized as revenue in 2027.

Over the next two years, GCP will recognize roughly $10B of revenue every month on average even if they don't sell a single new deal, since just over half of the $462 billion backlog converts within 24 months (and they seem to be adding to that backlog aggressively). This is what we call world-class in this business, with shades of the glory days of Azure. This is not too surprising, as the company recruited a lot of sales leadership from Microsoft, which has brought both benefits (higher expectations) and drawbacks (quality of life and very aggressive growth targets).
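For readers who want to check the backlog math, here is a minimal back-of-the-envelope sketch using only the figures quoted on the call. It assumes the backlog converts evenly month by month, which is a simplification (real contracts ramp unevenly), and it treats "just over half" as a 50% lower bound. The function and variable names are illustrative. Dividing the entire $462 billion by 24 months gives a ceiling of about $19B per month, while the half the CFO expects to convert in that window gives a floor of about $9.6B per month.

```python
# Figures quoted on the earnings call.
BACKLOG_USD_BN = 462.0   # total Cloud backlog, in billions of dollars
WINDOW_MONTHS = 24       # conversion window cited by the CFO

# Assumption: "just over half" is modeled as a 50% lower bound.
CONVERTING_SHARE_FLOOR = 0.5


def monthly_run_rate(backlog_bn: float, share: float, months: int) -> float:
    """Average monthly revenue in $B if `share` of the backlog converts evenly over `months`."""
    return backlog_bn * share / months


# Lower bound: only the share the CFO said converts within the window.
floor_rate = monthly_run_rate(BACKLOG_USD_BN, CONVERTING_SHARE_FLOOR, WINDOW_MONTHS)

# Upper bound: the whole backlog squeezed into the same window.
full_rate = monthly_run_rate(BACKLOG_USD_BN, 1.0, WINDOW_MONTHS)

print(f"Floor (half of backlog):  about ${floor_rate:.1f}B per month")
print(f"Ceiling (entire backlog): about ${full_rate:.1f}B per month")
```

Either way, the contracted revenue alone puts a high floor under the next two years before any new bookings are counted.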

The market has mostly rewarded them for this level of performance, and it's not difficult to see why the team is bullish on the next 24 months.