Infra Play #125: Anthropic

What happens when you build around missionaries rather than mercenaries?

In my “Best of 2025” article I called out Anthropic for the most impressive pivot last year in cloud infrastructure software:

Speaking of dominating token usage, holy schmoly, what a year for Anthropic. After a difficult 2024, where their newly hired sales team barely got to $1B ARR after a significant push from the hyperscalers, Claude Code, Sonnet 4.1, and Opus 4.5 became the strongest product-market fit in Enterprise AI, rivaling the consumer hold that ChatGPT has. Projected to finish at $9B ARR, Claude has firmly established itself as the most widely used LLM for coding, and its most recent release Opus 4.5 is seen as operating as a competent (if occasionally on the junior side) developer.

I've personally always had a preference for Claude as a primary LLM over ChatGPT, but I found the product inconsistent (due to a variety of challenges, many to do with the weak primary user surface, i.e. the website and app). As such, at the end of 2024 it was not difficult to imagine the company falling behind and getting acquired by AWS, which seemed the most interested party in working with them and pushing Sonnet as the LLM of choice in AWS Bedrock.

When the "war for research talent" played out earlier in the year, it was also rather obvious why that would be an existential threat for them: they could have lost some of their best talent to the ridiculous packages that Meta was flaunting.

Instead, something peculiar happened.

The key takeaway

For tech sales and industry operators: Anthropic is the definite optimist's bet in a market full of indefinite pessimists hedging across multiple model providers. The company is building as if they'll win, not as if they need optionality. The contrarian insight is that their safety positioning, which most viewed as a commercial handicap, became their moat: enterprises trust Claude with sensitive workflows because Anthropic's entire brand is "we think about what could go wrong." Without commercial proof, the safety positioning would have remained a nice story; Claude Code provided that proof by creating agentic coding infrastructure as a category rather than competing in GitHub's "copilot" frame. Most of their sales motion depends on intense partnering with the hyperscalers, AWS and GCP. These relationships create an effectively negative customer acquisition cost, i.e. Amazon and Google are paying their own sales teams to distribute Claude through Bedrock and Vertex. The flipside is that hyperscaler dependency creates correlation risk; if AWS or Google decide to prioritise their own models (or maybe Grok 5), the distribution advantage inverts overnight.

For investors and founders: From an investor perspective, the macro setup for Anthropic's IPO is favourable: we're in a phase where public markets are hungry for AI exposure but sceptical of unprofitable companies, and Anthropic's outperformance on unit economics positions them as the "quality" play in a sector full of "hopes and dreams" stories. The debt cycle dynamics are critical for founders to understand: Anthropic raised when capital was abundant, and their strong commercial performance means they can now access public markets before the cycle turns. The biggest founder lesson is that Anthropic's "safety-first" positioning was initially contrarian and is now becoming consensus in enterprise (helped by delivering some of the best frontier models in the industry). They were early to a market shift that turned their perceived weakness into their primary differentiation. The obvious risks are correlation-related: Anthropic's outcomes are correlated with NVIDIA's execution (Blackwell infrastructure powering new model training), hyperscaler relationships (distribution), and the broader AI investment cycle. If several of these turn negative simultaneously, well, things might turn ugly.

Keep thinking

After a difficult 2024, the team kept pushing, with a focus on quality research and technical depth. This led to Claude Code, originally launched in Feb '25.

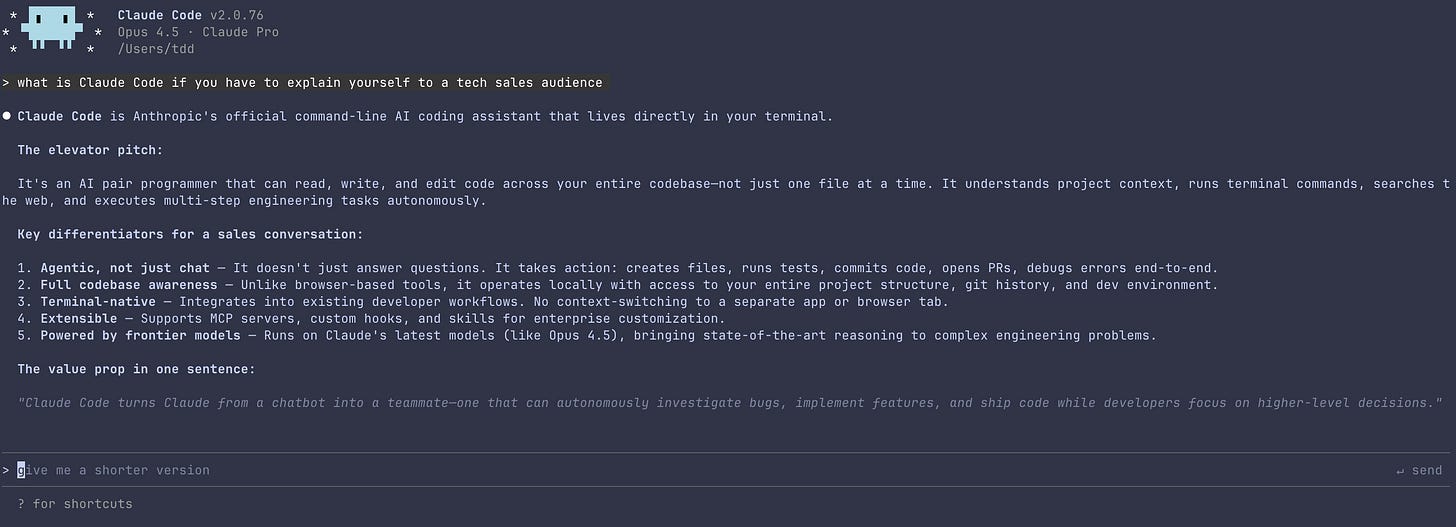

Claude Code itself is not a newly trained model or even a fine-tune of an existing one specifically for coding (GPT 5.2 Codex, for example, is a coding-focused version of GPT 5.2). Instead, it's their own agentic harness, essentially a set of tools and rules around Claude that users can interact with in an agentic capacity. Claude Code is predominantly used in the command-line interface of your computer, although they recently launched a cloud version that's suitable for some enterprise use cases.
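To make the idea of a harness concrete, here is a minimal Python sketch of the general pattern: a loop that asks a model for the next action, runs the requested tool, and feeds the result back. This is not Claude Code's implementation. The tool names (`read_file`, `run_tests`), the `stub_model` stand-in, and the step limit are all assumptions made for illustration, and a real harness would call an actual model API instead of the stub.

```python
from typing import Callable, Dict, List

# Hypothetical tools: names and behaviour are illustrative only.
def read_file(path: str) -> str:
    fake_files = {"app.py": "print('hello')"}
    return fake_files.get(path, "")

def run_tests(_: str) -> str:
    return "2 passed"

TOOLS: Dict[str, Callable[[str], str]] = {
    "read_file": read_file,
    "run_tests": run_tests,
}

def stub_model(history: List[dict]) -> dict:
    """Stand-in for an LLM call: picks the next step from the transcript."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if len(tool_results) == 0:
        return {"type": "tool_call", "tool": "read_file", "arg": "app.py"}
    if len(tool_results) == 1:
        return {"type": "tool_call", "tool": "run_tests", "arg": ""}
    return {"type": "final", "text": "Done: " + tool_results[-1]["content"]}

def run_harness(task: str, model=stub_model, max_steps: int = 10) -> str:
    """Loop: ask the model, run the requested tool, append the result."""
    history = [{"role": "user", "content": task}]
    for _ in range(max_steps):  # a "rule": never loop forever
        action = model(history)
        if action["type"] == "final":
            return action["text"]
        tool = TOOLS.get(action["tool"])  # a "rule": only registered tools run
        result = tool(action["arg"]) if tool else "unknown tool: " + action["tool"]
        history.append({"role": "tool", "name": action["tool"], "content": result})
    return "stopped: step limit reached"
```

Calling `run_harness("fix the bug")` walks through two tool calls before the stub returns a final answer. The point of the sketch is that the model stays the same while the tools and rules around it do the agentic work.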

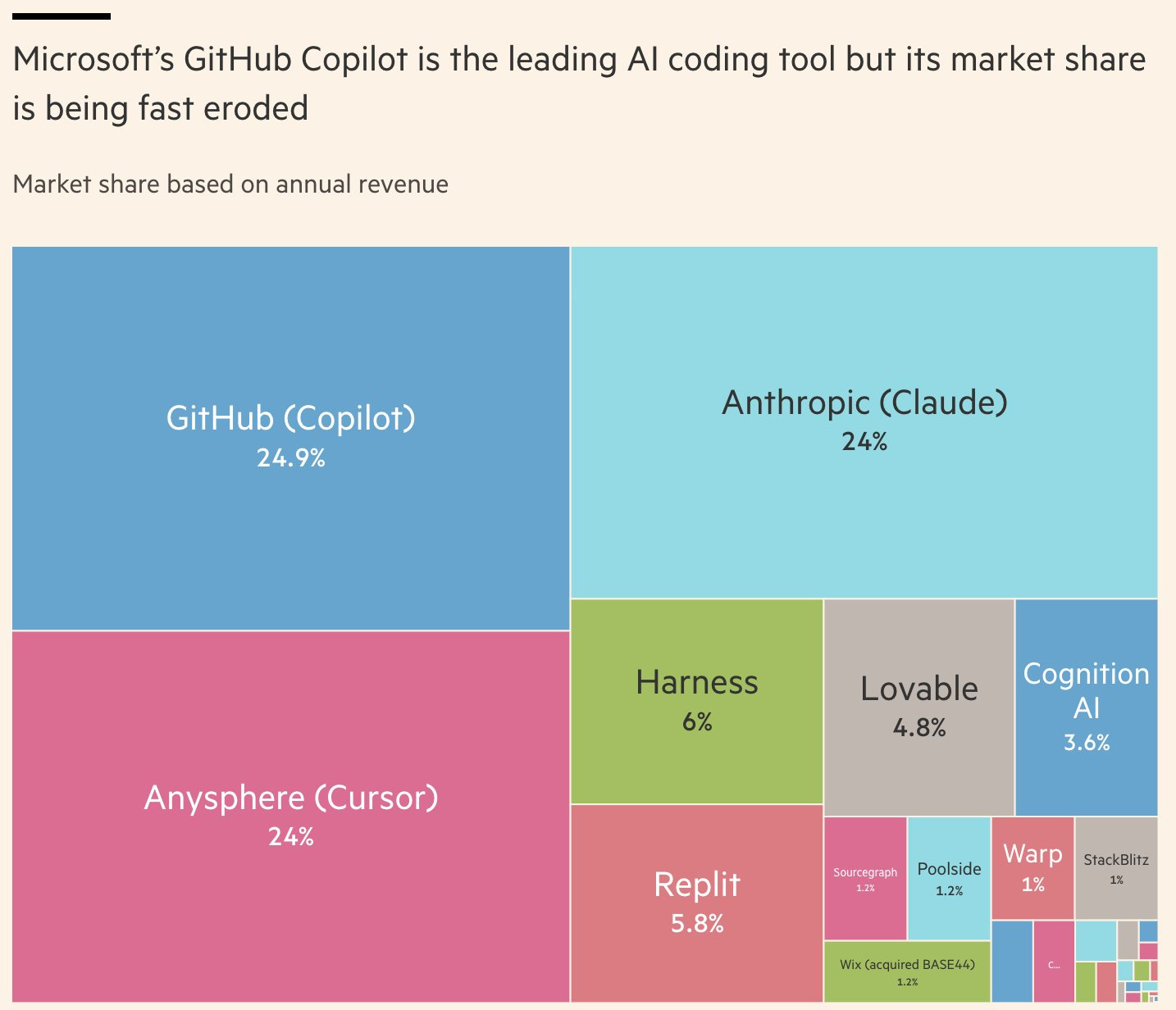

As of this writing, Claude Code (measured by the API tokens consumed through it) has reached $1B ARR, making it essentially as successful as the standout winners so far in the agentic software market, GitHub Copilot and Cursor. The product has become so important for Anthropic that they even acquired Bun, the open source JavaScript runtime that Claude Code uses to run in the CLI. This was a rather unusual acquisition (Bun makes no money and will remain open source) but it makes perfect sense from a developer experience perspective.

We are not here for the $1B of ARR, though. If it's not obvious by now, what Claude Code demonstrated was that Anthropic models were exceptional at tool calling when the right scaffolding was built around them. The majority of Cursor revenue is tied to usage of Anthropic models. Almost every tool in the image above pays at least a portion of its revenue to Anthropic.

This is what product-market fit looks like in a specific domain. Anthropic's models were consistently the most expensive and rarely benchmarked as number one; the company often struggled to actually serve inference (it was almost always best to avoid the official API if you were building your product around the model), and brand recognition for "CLLLAAUAUUUDEEE" was almost zero at consumer and enterprise level. But if you put Sonnet 4.1 or nowadays Opus 4.5 in the right harness, Claude would code like you wouldn't believe.

But you don't get to this stage just because somebody figured out a slightly better interface for your product. There is a lot more to this story.